Jessica is our Director of Engineering and cares passionately about making sure GenUI is the best place to work for people who want to build software.

Updated Oct 14, 2020

It’s really hard to define success for a large, dynamic group of creatives busy solving complex problems every day. Each software project, team, and client is different and requires a tailored approach. At GenUI, we found the need for a performance process worthy of the talent at our company. Short of creating a set of per-person criteria, we focus on activities that drive the right results in a creative building process.

Performance reviews are hard. At large companies, I felt the criteria only had relevance during the review, and the evaluation approach was one-size-fits-all instead of personalized. At smaller companies, reviews came irregularly or not at all, and I missed substantive discussions about my performance.

When I started at GenUI, it was inspiring to discover a company that walks its talk as a culture-strong organization. The company is also the ideal size for building a review process to not only fit the team but encourage and highlight behaviors that:

When we began creating our review process, I quickly realized that rooting a company’s performance reviews in its values strengthens the culture. Yet during preliminary research of existing performance review systems, we didn’t find a workflow that matched our value set. That’s why our process and custom performance management system grew out of best practices from MIT’s Human Resources department. The result is a unique process that reflects our company’s character, and we are proud of that.

Of course, we will keep iterating, but we want to share the three essential practices we designed our process around to support our values.

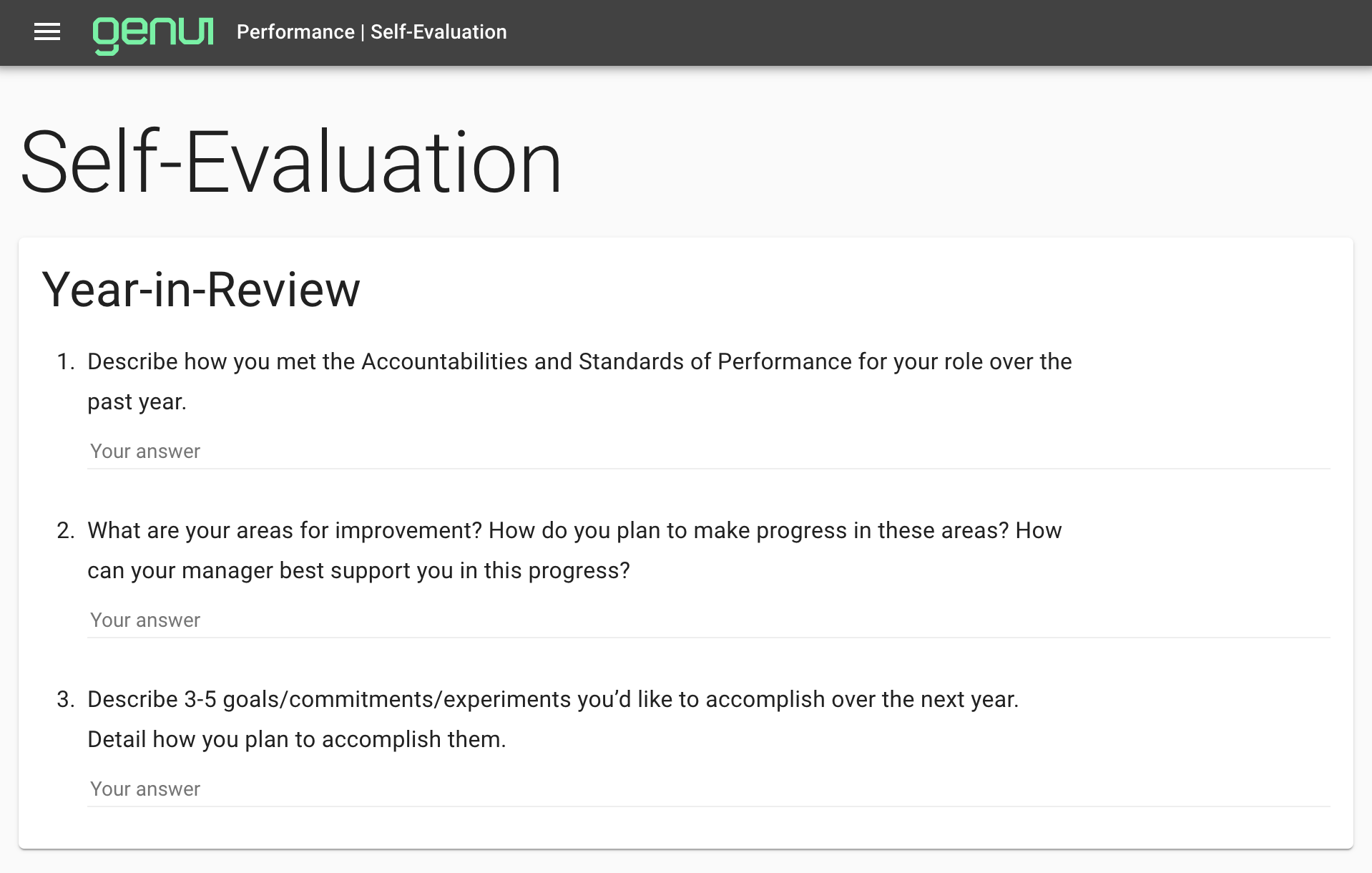

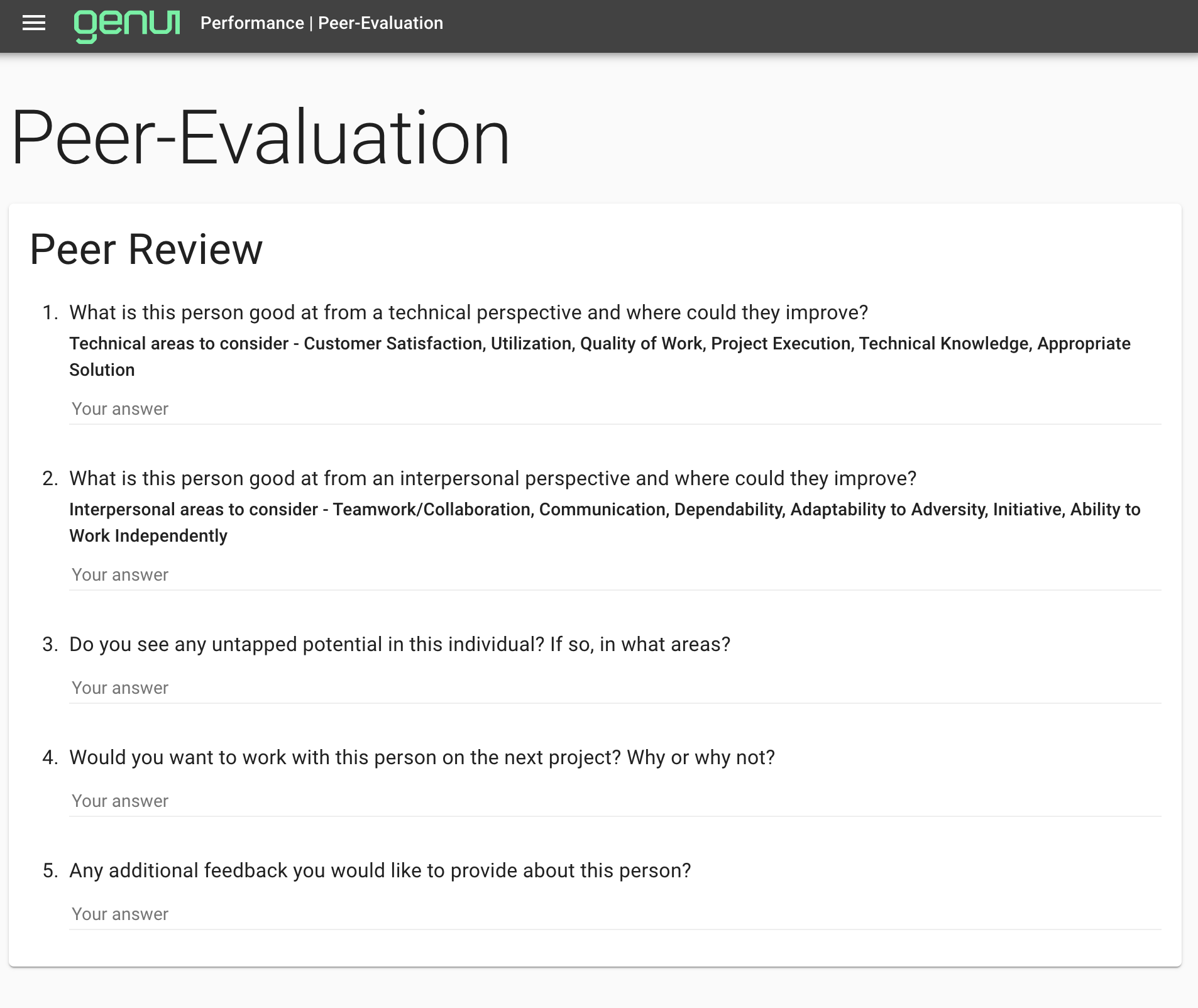

A performance review process is less biased when data arrives from multiple sources. GenUI’s data collection step includes three critical components: self-review, numerous peer reviews, and client feedback.

We send notes to all our clients to make sure that their feedback is reflected in the performance review, both what we're doing well and where we could improve.

Sourcing information from multiple perspectives allows for a better understanding of each individual’s performance. But there’s an additional benefit to increased accuracy. We are diverse human beings, so the way feedback is phrased often helps us interpret the message content. We also experience lightbulb moments of self-reflection when multiple voices form a consensus, even if each is stated differently. Lending various voices to a review process increases the chances that the feedback lands. Seeking open and honest feedback from everyone in the company remains a practice at GenUI, and we knew our reviews needed to reflect that same plurality of voices.

If you're in the tech industry, then you've likely seen performance "buckets" or received a numerical evaluation rating at some point. But did it help you grow?

Other than a checkbox for managers to say they rated their employees relative to each other, how much actionable value do such metrics provide?

Instead of a scale, we think of competencies on a continuum with limitless possibilities for improvement because we encourage everyone at our company to continue growing and learning in and outside of work.

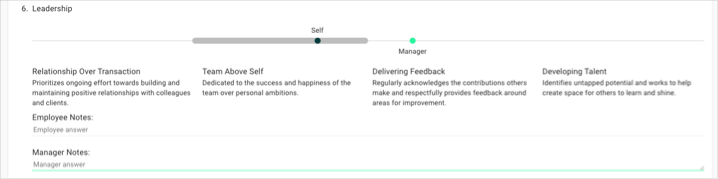

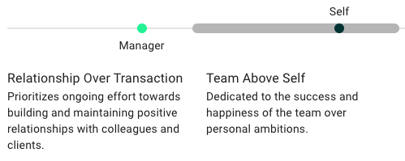

Our UI for this measure felt most natural as a continuous slider. The table stakes sit on the left; these are behaviors we expect everyone to exhibit frequently. (Frankly, they're also part of our hiring criteria!)

As you move to the right, the behaviors become more advanced, with the farthest being the “black-belt” level. Each is tailored to our organization, and we revise them every year to make sure they represent a genuine continuum of behaviors that matter in our culture. We want to make sure we’re observing these behaviors; we think it’s important to be grounded in our efforts rather than aspirational.

Our process asks each employee to rate themselves on this slider. Then their manager rates them. Everyone on our team is encouraged to provide specific examples of a behavior corresponding with each slider rating. If there is a discrepancy between the manager and employee rating, it typically sparks a productive, honest conversation.

A visually intuitive continuum gives clear directional guidance on how to improve. In our performance conversations, we often talk about behaviors just to the right of where the employee is rated and try to develop specific plans around advancement to this achievable goal. These conversations invite openness through questions like: "What does this competency look like for you? Who do you think does this well at our company? What's a milestone or checkpoint for improving on this competency that we can agree on?"

Okay, but wait, does this GenUI performance tool only reinforce that everyone is doing well all the time?

No. We communicate expectations based on each employee's position in the company. Placement within the grey bar represents their position:

For each competency, an employee performs below, at, or above the expected range for their role and seniority. Conversations around each competency often start with where the rating falls. For a rating below the expected range, we state the performance issues and expectations clearly, so the path to improvement is clearly outlined. Our goal remains to support movement and progress rather than to punish a low score.
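To make the range comparison concrete, here is a minimal Python sketch. It assumes a slider position is stored as a number between 0.0 and 1.0 and that the grey bar is a low/high pair per role. The names `ExpectedRange` and `classify_rating` and all sample values are hypothetical and purely illustrative; this is not the actual implementation of our tool.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ExpectedRange:
    """Hypothetical grey bar: the slider span expected for a role and seniority."""
    low: float
    high: float


def classify_rating(rating: float, expected: ExpectedRange) -> str:
    """Return 'below', 'at', or 'above' relative to the expected range."""
    if not 0.0 <= rating <= 1.0:
        raise ValueError("rating must lie on the 0.0-1.0 slider")
    if rating < expected.low:
        return "below"
    if rating > expected.high:
        return "above"
    return "at"


# Illustrative values only (assumed, not real expectations for any role).
senior_engineer = ExpectedRange(low=0.5, high=0.75)
for rating in (0.4, 0.6, 0.9):
    print(rating, classify_rating(rating, senior_engineer))
```

The same rating can land in a different category for a different role, which is why the conversation starts from the range rather than from the raw slider position.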

It may seem like a nit-picky UI element to offer a slider instead of a rating, but I think this distinguishes our tool. As one of our employees said to me during a recent review:

"Measurement tools like numbers or boxes evoke a negative feeling of exclusion. The 'I'm here... I'm not there' mentality. With a slide tool, though, there's a fluidity that offers encouragement to explore and grow."

Behind the scenes, a number represents each team member’s performance for every competency. As managers, we look at the distribution of scores for each competency. We then check historically for each individual and the group’s collective performance year-over-year.
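As a rough illustration of that kind of check, the Python sketch below summarizes the distribution of scores for one competency and compares the group average between two years. The data layout, the function names `summarize` and `year_over_year_change`, and every score are assumptions made up for the example; they are not real review data.

```python
from statistics import mean, median

# Hypothetical scores on a 0.0-1.0 slider: year -> competency -> one score per person.
scores_by_year = {
    2019: {"leadership": [0.25, 0.5, 0.5, 0.75], "growth": [0.5, 0.5, 0.75, 1.0]},
    2020: {"leadership": [0.5, 0.5, 0.75, 0.75], "growth": [0.5, 0.75, 0.75, 1.0]},
}


def summarize(scores):
    """Describe the spread of scores for a single competency."""
    return {
        "min": min(scores),
        "median": median(scores),
        "mean": mean(scores),
        "max": max(scores),
    }


def year_over_year_change(data, competency, earlier, later):
    """Difference in the group's mean score between two years."""
    return mean(data[later][competency]) - mean(data[earlier][competency])


print(summarize(scores_by_year[2020]["leadership"]))
print(year_over_year_change(scores_by_year, "leadership", 2019, 2020))
```

The same comparison works for one person by passing that individual's scores per year instead of the whole group's.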

Last year, we found lower scores than we’d like in the leadership and growth competency numbers, specifically with "giving feedback" as the target behavior. With this data in mind, we created a training around increasing our direct communication. This year, we hope to see a numerical improvement in these two areas.

This works on an individual basis too; say someone set a goal to share their technical knowledge more often with the team and produced a lunchtime technical talk. The resulting peer feedback and corresponding competency rating should reflect this effort. Year-to-year visible progress then becomes intrinsically motivating.

Our company weighs technical and consulting skills equally when considering yearly performance. This more holistic competency data allows for a calculation of each employee’s position in our matrix while making the evaluation process more objective, repeatable, and fair.
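One way to picture equal weighting is the small Python sketch below. It assumes each competency belongs to either a technical or a consulting group and that each group is averaged before the two are combined. The function name `combined_score`, the grouping, and the ratings are hypothetical, and the sketch does not attempt to reproduce how our matrix is actually calculated.

```python
from statistics import mean


def combined_score(technical: list[float], consulting: list[float]) -> float:
    """Equal-weight combination: each skill group counts for half."""
    return 0.5 * mean(technical) + 0.5 * mean(consulting)


# Illustrative ratings only (assumed slider values between 0.0 and 1.0).
technical = [0.5, 0.75, 1.0]  # three technical competencies
consulting = [0.25, 0.75]     # two consulting competencies
print(combined_score(technical, consulting))
```

Averaging within each group first keeps the weighting equal even when one group has more competencies than the other.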

At GenUI, we pride ourselves on creating a home for the best developers and an organization reinforcing behaviors that nurture a great work environment. We created a system that demystifies the review process for all involved and analyzes actions to promote fulfillment and collaboration. Our distinct culture then drives excellent results for clients.

In the spirit of software development, we continue to iterate on our performance tool so that we can meet client challenges together as strong, self-aware individuals focused on technological growth and teamwork.

PS: We created the backend of our performance system in Elixir and couldn’t be more enamored with the language.
